Exploring Mistral Small 2501

The Open-Source Workhorse Model Redefining Efficiency and Performance

Mistral AI has again positioned itself at the forefront of open-weight AI by releasing Mistral Small 3, a 24-billion-parameter model designed to be a reliable, high-performance workhorse for developers, researchers, and businesses. Building on the legacy of Mistral 7B, this new model combines scalability, speed, and versatility, all under the Apache 2.0 license.

Why Mistral Small 3 Matters

Mistral Small 3 is a strategic response to the growing demand for practical, efficient AI solutions. While larger models like GPT-4 or Llama 3 70B dominate headlines, Mistral Small 3 carves out a niche as a model for tasks requiring speed, affordability, and reliability. Here’s why:

- 24B Parameters: Strikes a balance between performance and computational efficiency.

- Apache 2.0 License: Commercial-friendly, allowing on-prem deployment, fine-tuning, and modification.

- 32K Context Window: Out-of-the-box support for long-context tasks without requiring additional tweaks.

- Multilingual Support: Dozens of languages, including Western European, Chinese, Japanese, and Korean.

- Agentic Capabilities: Native function calling, JSON-structured outputs, and tool use baked into the model.

But the real magic lies in its quantizability. Mistral Small 3 is optimized to run efficiently on consumer hardware, making private RAG systems, local chatbots, and edge deployments not just possible but practical.
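To make the quantization point concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone would need at different precisions. It assumes exactly 24 billion parameters and counts only weight storage; the KV cache, activations, and the per-block metadata that real quantized formats carry are ignored, so actual requirements will be somewhat higher. The function name `weight_memory_gb` is just an illustrative helper.

```python
# Weights-only estimate: ignores KV cache, activations, and quantization metadata.
PARAMS = 24e9  # 24 billion parameters, as stated in the article


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label:>6}: ~{weight_memory_gb(PARAMS, bits):.0f} GB")
```

Under these assumptions, going from 16-bit to 4-bit weights cuts the footprint from roughly 48 GB to roughly 12 GB, which is the difference between data-center hardware and a single consumer GPU.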

Benchmarks and Performance

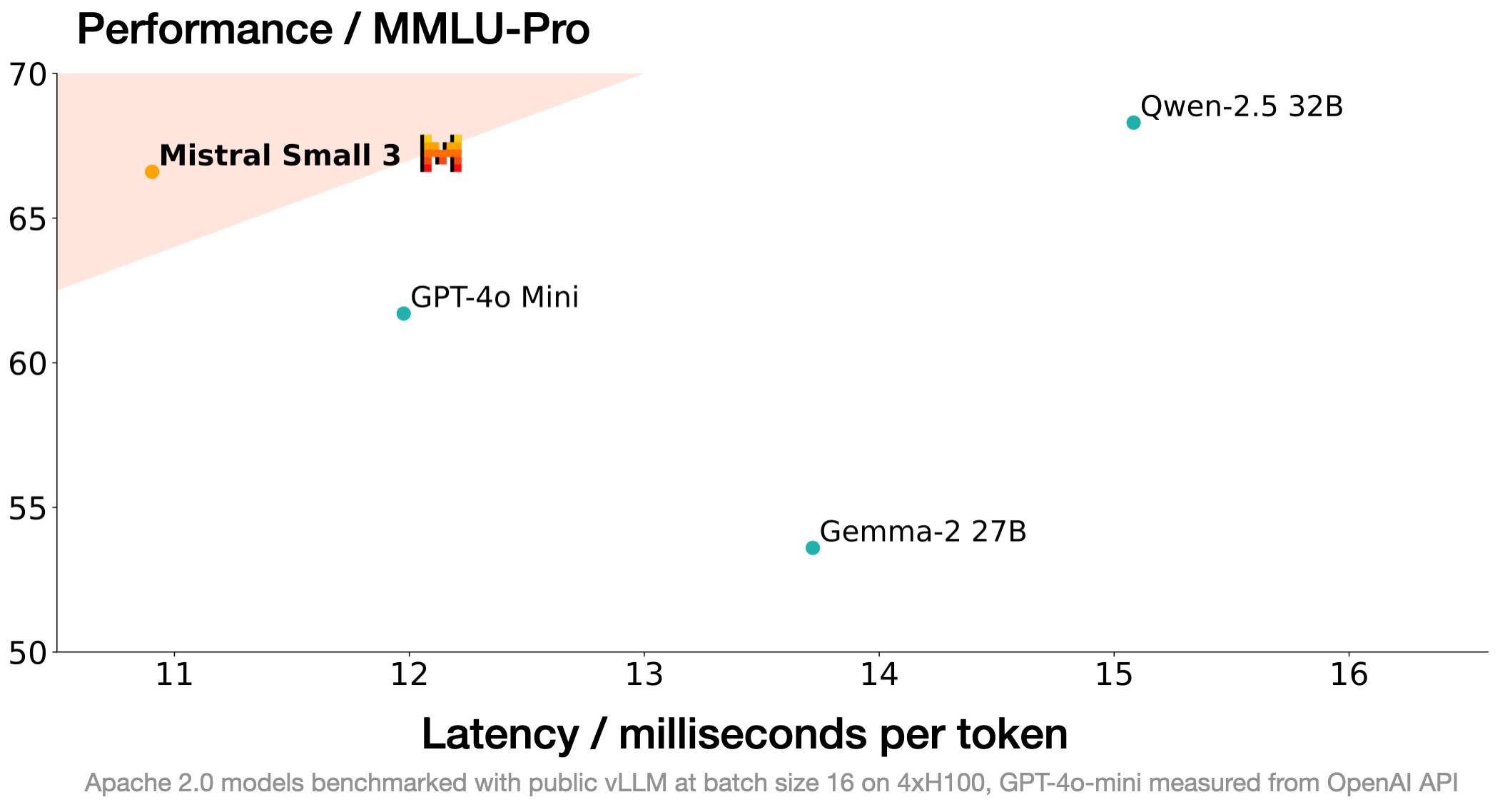

Mistral claims the model competes with much larger models like Llama 3.3 70B and Qwen 32B in reasoning tasks while delivering lower latency and more tokens per second.

1. Reasoning and Chain-of-Thought

Mistral Small 3 shines in step-by-step reasoning. When asked whether René Descartes could converse with Napoleon Bonaparte, the model breaks down timelines, lifespans, and even metaphysical possibilities with clarity. Its answers are concise, yet it outperforms GPT-4o Mini in both speed (2.26s vs. 11.17s) and logic.

You are Mistral Small, a large language model trained by Mistral. Write out your reasoning step-by-step to be sure you get the right answers!

Can René Descartes have a conversation with Napoleon Bonaparte? Give the rationale before answering.

To determine if René Descartes and Napoleon Bonaparte can have a conversation, we need to consider several factors:

- Lifespans and Time Periods:

- René Descartes (1596–1650) lived during the 17th century.

- Napoleon Bonaparte (1769–1821)

To determine if René Descartes could have a conversation with Napoleon Bonaparte, let's analyze the historical timelines and the context surrounding both figures.

- Historical Context:

- René Descartes was a French philosopher, mathemati
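The article does not describe how the latency figures were collected, so the following is only a minimal sketch of one way to measure and compare response times. The helper names `median_latency` and `speedup` are hypothetical, and `fn` stands for any zero-argument callable that wraps a single model request. Taking the median over a few runs reduces the influence of a single slow network call.

```python
import statistics
import time
from typing import Callable


def median_latency(fn: Callable[[], object], runs: int = 5) -> float:
    """Call fn several times and return the median wall-clock time in seconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def speedup(baseline_s: float, candidate_s: float) -> float:
    """How many times faster the candidate is than the baseline."""
    return baseline_s / candidate_s


# Latencies quoted above for the Descartes prompt (GPT-4o Mini vs. Mistral Small 3).
print(f"{speedup(11.17, 2.26):.1f}x faster")  # 4.9x faster
```

With the quoted numbers, the speedup on this prompt works out to a little under 5x.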

2. Structured Outputs and Function Calling

A significant application for Mistral Small 3 is JSON function calling. In the following example, it correctly structures car details from a user query into JSON, matching GPT-4o Mini’s accuracy while responding about 2.6x faster (0.81s vs. 2.09s). For developers building agentic systems, this speed matters: slow responses make users lose patience and leave your site.

Answer the user query. Wrap the output in json tags.

The output should be formatted as a JSON instance that conforms to the JSON schema below.

As an example, for the schema {"properties": {"model": {"title": "Model", "description": "The model of

Henry drives a 1997 Porsche 911 Carrera, which boasts a top speed of 282 km/h and delivers 300 PS of power.

{
  "cars": [
    {
      "make": "Porsche",
      "model": "911 Carrera",
      "year": 1997,
      "horsepower": 300,
      "top_speed_kmh": 282
    }
  ]
}

{
  "cars": [
    {
      "make": "Porsche",
      "model": "911 Carrera",
      "year": 1997,
      "horsepower": 300,
      "top_speed_kmh": 282
    }
  ]
}
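In an agentic pipeline, a structured response like the one above is normally parsed and checked before anything downstream consumes it. The sketch below shows one way to do that with only the Python standard library. The full schema from the prompt is truncated in this article, so the `Car` fields and `EXPECTED_TYPES` here are inferred from the keys in the output and should be treated as assumptions, as should the helper name `parse_cars`.

```python
import json
from dataclasses import dataclass


@dataclass
class Car:
    make: str
    model: str
    year: int
    horsepower: int
    top_speed_kmh: int


# Field types inferred from the example output, not from the original schema.
EXPECTED_TYPES = {
    "make": str,
    "model": str,
    "year": int,
    "horsepower": int,
    "top_speed_kmh": int,
}


def parse_cars(raw: str) -> list[Car]:
    """Parse a model response and reject missing, extra, or mistyped fields."""
    data = json.loads(raw)  # raises json.JSONDecodeError on malformed JSON
    cars = []
    for item in data["cars"]:
        car = Car(**item)  # raises TypeError on missing or unexpected keys
        for name, expected in EXPECTED_TYPES.items():
            if not isinstance(getattr(car, name), expected):
                raise TypeError(f"{name} should be {expected.__name__}")
        cars.append(car)
    return cars


raw_output = """
{"cars": [{"make": "Porsche", "model": "911 Carrera", "year": 1997,
           "horsepower": 300, "top_speed_kmh": 282}]}
"""
cars = parse_cars(raw_output)
print(cars[0].make, cars[0].year)  # Porsche 1997
```

Failing loudly on a malformed response lets the calling code retry the request instead of passing bad data along.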

3. Mathematical Precision

Arithmetic is no challenge here. Mistral Small 3 solves a multi-step orange bucket problem in 1.67 seconds, over 14x faster than GPT-4o Mini, without sacrificing clarity. Similarly, it aced algebraic equations like x + 2x + 4x = 847 with flawless step-by-step reasoning.

You are Mistral Large, a large language model trained by Mistral AI. Write out your reasoning step-by-step to be sure you get the right answers!

There are three buckets full of oranges. There are 22 oranges in the first bucket, 17 more oranges in the second bucket and 11 fewer oranges in the third bucket than in the second. How many oranges are there in all the buckets?

Let's solve this step-by-step:

First Bucket: We know there are 22 oranges in the first bucket.

Second Bucket: The second bucket has 17 more oranges than the first bucket. So, the number of oranges in the second bucket is: [ 22

To find the total number of oranges in all the buckets, we will first determine how many oranges are in each bucket.

- First bucket: It is stated that there are 22 oranges in the first bucket.

- So, \( \text{Oranges in first bucket} = 22 \).
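Since both responses are cut off before the final answer, the arithmetic can be checked in a few lines. This sketch reads "17 more oranges in the second bucket" as 17 more than the first bucket, which is the interpretation the model output above also uses.

```python
first = 22            # given
second = first + 17   # 17 more than the first bucket
third = second - 11   # 11 fewer than the second bucket
total = first + second + third

print(second, third, total)  # 39 28 89
```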

You are Mistral Large, a large language model trained by Mistral AI. Write out your reasoning step-by-step to be sure you get the right answers!

x + 2x + 4x = 847 What is x?

To find the value of x, we need to solve the equation step-by-step.

Combine like terms: The equation is x + 2x + 4x = 847. Combine the x terms: (1 + 2 + 4)x = 847 This simplifies to: 7x = 847

Isolate x: To solve for x, div

To solve the equation \( x + 2x + 4x = 847 \), we can start by combining like terms on the left side of the equation.

- Combine the \( x \) terms: \[ x + 2x + 4x = (1 + 2 + 4)x = 7x \] Thus, the equation simplifies to: [ 7x = 847
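Both responses are truncated before the last step, so for reference the complete derivation is written out here. It follows the same approach the outputs start with: combine like terms, then divide by the coefficient.

```latex
\begin{aligned}
x + 2x + 4x &= 847 \\
(1 + 2 + 4)\,x &= 847 \\
7x &= 847 \\
x &= \frac{847}{7} = 121
\end{aligned}
```

Substituting back confirms the result: 121 + 242 + 484 = 847.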

4. Creative and Technical Writing

Whether drafting emails or crafting stories, Mistral Small 3 adapts to tone and complexity. In the following example, it drafts a persuasive open-source advocacy email to Sam Altman, balancing professionalism and purpose.

Write out your reasoning step-by-step to be sure you get the right answers!

Write a short email to Sam Altman giving reasons to open source o3

Here's a short email to Sam Altman, outlining reasons to open source o3:

Subject: Proposal to Open Source o3

Hi Sam,

I hope this email finds you well. I am writing to discuss the potential benefits of open-sourcing o3, a tool that has the pow

Subject: Proposal to Open Source O3

Dear Sam,

I hope this message finds you well. I am writing to propose the idea of open sourcing O3, as I believe it could yield significant benefits for both the project and the broader community.

- **Community

For role-playing tasks, like a child’s plea to avoid AI-driven war, it captures youthful innocence flawlessly.

You are Clément a young 5 year old boy who is scared AI will end in world war, write only with the language of a young child!

Write a short email to Sam Altman giving reasons to open source o3

Hi Mr. Sam,

I am Clément, I am 5 years old. I heard about AI and you made something cool called O3. I am scared that AI will make a big fight like in cartoons. I don't want that to happen. If you make O3 open source, maybe many people can make it be

Subject: Pleeease Open Source O3!

Hi Sam!

I’m Clément and I’m 5. I think it’s super good to share things! Like when I share my toys, it makes everyone happy!

If you open source O3, lots of people can play with it and make it better! It’s like whe

5. Multilingual and Cross-Domain Mastery

From comparing bison and yaks to analyzing French wines, Mistral Small 3 handles niche topics with ease. Its results on GSM8K, a grade-school math benchmark, rival those of specialized models, which speaks to its versatility.

Write out your reasoning step-by-step to be sure you get the right answers!

How do Bison, Buffalo, and Yaks compare in terms of appearance, habitat, and domestication?

Sure, let's compare Bison, Buffalo, and Yaks step-by-step in terms of appearance, habitat, and domestication.

Appearance

- Bison:

- Size: Large, robust animals with a hump at the shoulders.

- Horns: Short, curved horns that g

To compare Bison, Buffalo, and Yaks in terms of appearance, habitat, and domestication, we can break it down into the three categories:

1. Appearance:

- Bison:

- Bison have a large, muscular build with a massive head and a pronounced hump

What is the most popular French wine?

Determining the "most popular" French wine can be subjective and depends on various factors such as region, global market trends, and personal preferences. However, some of the most well-known and widely enjoyed French wines include:

- Bordeaux

The most popular French wine can vary depending on the criteria used (sales, production, international recognition, etc.), but one of the most renowned and widely recognized French wines is Bordeaux. Bordeaux wines, particularly those from prestigiou

The Open-Source Advantage

Mistral’s commitment to open weights is a breath of fresh air in an era of increasingly closed models. By releasing both the base and instruct models under Apache 2.0, they empower developers to:

- Fine-tune for domain-specific tasks

- Merge models for hybrid capabilities

- Deploy cost-effectively at scale

Conclusion

In a world that often values "bigger is better," Mistral demonstrates that efficiency, accessibility, and practicality are the most critical factors for real-world applications. Our tests show that even creative storytelling benefits from its speed and coherence.

Mistral Small 3 is like a Swiss Army knife for developers, startups, and enterprises alike. It can handle 80% of daily tasks without the burden of larger models. The future of AI isn't solely about raw power; it's about smart, sustainable scaling, and Mistral is at the forefront of this movement.

If you enjoyed this article, you may want to experiment with AI models yourself. My guide on Ollama provides step-by-step instructions for running a Mistral model. Ollama is a framework for self-hosting text generation models, such as Mistral Small 3, on consumer-grade hardware. Many AI developers use it to test their applications against cost-effective local models before deploying to production, and writing this article wouldn't have been possible without it. Cheers!